★★★★★ Exclusive Interviews

“The most beautiful interview with me so far,” Eva Habermann wrote on social media about this FILMPULS article. And that after being interviewed more than 1,000 times over the last 30 years for her more than 70 films and series.[...]

His work on blockbusters such as «Titanic» (1997) or «Avatar» (2009) earned Andrew «Andy» R. Jones two Oscars. FILMPULS was able to speak with the animation director while he was working on the latest Disney blockbuster[...]

With their company Dedicam, Dionys Frei and Davide Tiraboschi are among the spearheads of the international drone business. Their careers have made boyhood dreams come true. In an interview with FILMPULS, the two Swiss talk about their work[...]

Film Business & Entertainment

Reader question for Dr. Film: I have a brilliant idea for a TV crime drama. Unfortunately, I don't have the time to write a screenplay, and I don't want to either. How can [more …]

How-to: Video Testimonials

Making employee videos truly successful: these 6 points are the key

People invest attention and emotion whenever other people are involved. That is one of the reasons employee videos are so powerful. This article explains what to watch out for in the [more …]

How to enhance your video easily with B-roll footage

If you say A, you have to say B as well. For B-roll footage and videos, that only holds if you truly understand and master how to use this kind of footage. Only then does [more …]

Videos: Picture and Sound Editing

- Ask a video technician, director, editor or sound engineer about the single most important thing to keep in mind in the post-production of a video, and they will tell you: there are a hundred [more …]

- Sound design can create atmosphere, heighten suspense, or make a film scene more realistic or more abstract. A sequence in which a character walks through a forest is far less intense if [more …]

- CGI has become an integral part of film production and is used to create realistic images that would otherwise be impossible to achieve. Animation in 2D or 3D makes it possible [more …]

Film and Storytelling Explained Simply

Analyses

Why you need to engage with vertical video as a video production company

In video marketing and in communication with moving images, landscape format has so far been the measure of all things. With the triumph of the smartphone, however, the trend is now clearly moving toward vertical format. That has consequences. [more …]

Expert Knowledge

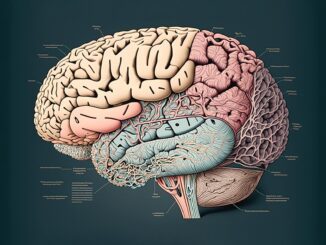

Why what we see is far more than what the human eye perceives as a sense organ

The eye and the brain shape reality, or what we take for reality, in fascinating ways. When we see, the two organs work together far more closely and in far more complex ways than one might think at first glance. [more …]

Expert Knowledge

Why subjective perception goes with film like butter on bread

In connection with the question of what subjective perception can be, a renowned daily newspaper claimed that films have become “relentlessly subjective and relentlessly arbitrary” only because of YouTube. That's nonsense! [more …]